ecological change

in Canada

"Wow this is an awesome database! . . . it looks like the records have everything I am interested in."

6 St-Lawrence St

Bishops Mills, Oxford Station

Ontario, Canada K0G 1T0

(613) 299-3107

info@fragileinheritance.ca

A History Of The Database

Database Introduction

Database Uses

Screenshot of a sample record

Example of a printed record

Specimen labels

Our database began in 1983 on a Hewlett Packard 9845 computer at the National Museum of Natural Sciences' Beamish Building. Fred's handwriting had become too Darwinian for curatorial technicians to interpret, so he printed catalogue data on file cards for entry into the museum catalog. This was also our first lesson in the impermanence of computer media, when the dot-matrix printing later faded on the cards.

With the availability of the portable IBM-clone Hyperion computer in 1984, Fred shifted his

catalogue to a computer listing (as output from BASIC data statements). In the cross-continental

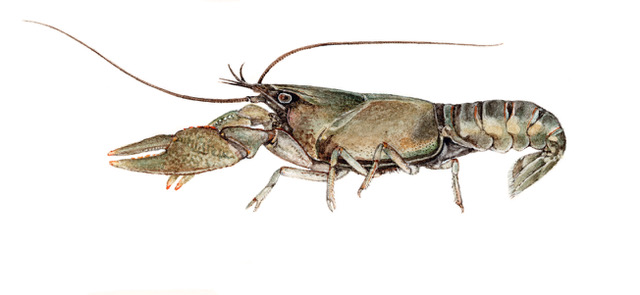

descriptive field work for Fragile Inheritance: a Painter's Ecology of Glaciated North America,

from 1984-1989, we enhanced a text listing of these catalogue entries to form a narrative,

printing from a carbon ribbon onto cotton-fibre paper. In 1990, for the Bruce Peninsula Herp

Survey, with the guidance of Wayne Weller of the Ontario Herpetofaunal Summary, Fred began to

use dBase III+, in a data structure derived from that of the OHS, to record observations, and to

output prose-like species accounts and descriptions of sites from the database. By 1994, we

moved to Foxpro 2.0, the most advanced MS-DOS database application, and to a laser printer

nearly as heavy as a woodstove, inherited from Aleta's editor, Robin Brass.

In 1993 Fred and Aleta began to call themselves the Biological Checklist of the Kemptville Creek Drainage Basin (BCKCDB), in an attempt to counter the anthropocentric bias of local politics with an institution centred on the non-human inhabitants of their home area, in the form of an all-taxon biodiversity database. In 1994 the database switched from dBase to Foxpro 2.0, and the format changed to hold narrative text and prose renditions of datasheets. Fred wrenched almost everything he did into formats that allowed its data to be stored in records of this database. Notable examples are the book A Place to Walk: A Naturalist's Journal of the Lake Ontario Waterfront Trail (Karstad et al. 1995), largely edited down from database output, and the report to the Ontario Ministry of Natural Resources on the effects of the 1998 ice storm (Seburn & Schueler 2000). For that report, a number of fields for measurements of snowpack profiles were added to the database structure for analysis, and were then converted to prose summaries for archiving in the standard format.
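The step of turning extra measurement fields into prose for archiving can be pictured with a small sketch. It is written in Python purely for illustration (the actual work was done in Foxpro), and the class, field names, units, and sample values are hypothetical, not the structure used for the ice-storm report.

```python
from dataclasses import dataclass


@dataclass
class SnowpackProfile:
    # Hypothetical measurement fields; the real Foxpro field names are not given here.
    site: str
    date: str
    total_depth_cm: float
    ice_layer_mm: float
    crust_count: int


def to_prose(p: SnowpackProfile) -> str:
    """Render the extra measurement fields as one sentence of narrative text,
    so the numbers can be archived in an ordinary free-text field."""
    crusts = "crust" if p.crust_count == 1 else "crusts"
    return (
        f"{p.date}, {p.site}: snowpack {p.total_depth_cm:g} cm deep, "
        f"with {p.crust_count} {crusts} and an ice layer {p.ice_layer_mm:g} mm thick."
    )


profile = SnowpackProfile("Bishops Mills", "1998-01-15", 42.0, 8.5, 2)
print(to_prose(profile))
```

Once the sentence is stored, the temporary measurement fields can be dropped from the structure without losing what they recorded.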

The BCKCDB database system was named after Grinnell in 1996, when Fred began to pull the input and output programs and related database tables into an organized database 'project'. Filtering and indexing of the database records allowed emulation of the three elements of the Grinnell system: Journal, Catalog, and Species Accounts.
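As a rough illustration of how filtering and indexing a single table can stand in for the three Grinnell elements, here is a Python sketch (the real system was a Foxpro project). The `Record` fields and the three function names are hypothetical; EOBase's actual fields are listed on its own fields page.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Record:
    # Hypothetical minimal record, not the real EOBase structure.
    date: str                           # ISO date, so string order is chronological
    site: str
    species: str
    note: str
    catalogue_no: Optional[int] = None  # set only for records backed by a specimen


def journal(records: List[Record]) -> List[Record]:
    """Journal: every record in chronological order, then by site."""
    return sorted(records, key=lambda r: (r.date, r.site))


def catalog(records: List[Record]) -> List[Record]:
    """Catalog: only specimen records, ordered by catalogue number."""
    return sorted(
        (r for r in records if r.catalogue_no is not None),
        key=lambda r: r.catalogue_no,
    )


def species_accounts(records: List[Record]) -> Dict[str, List[Record]]:
    """Species Accounts: records grouped by species, each group chronological."""
    groups: Dict[str, List[Record]] = defaultdict(list)
    for r in journal(records):
        groups[r.species].append(r)
    return dict(sorted(groups.items()))
```

Because all three views are computed from the same records, an observation only has to be entered once.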

Our 1997 Workshop on Natural History/Natural Resource Databases and Mapping at Kemptville College was attended by naturalists, natural scientists, museum workers, land managers and stewards, natural history database proprietors, resource extraction managers, landowners, Land Stewardship Council members, Mohawk members of the Eastern Ontario Model Forest, and representatives of the Natural Heritage Information Centre and the Canadian Parks and Wilderness Society. We discussed the use and compatibility of databases in understanding the ecology, biota, and human occupancy of eastern Ontario.

Starting where the Database Workshop left off, and with our work crystallizing around the need for a catalogue for the newly created Eastern Ontario Biodiversity Museum, we set out, with funding from the Eastern Ontario Model Forest and the help of Anita Miles, to see what would be needed for a general natural history database. Fred and Anita consulted widely about databases then in use, and made minor changes to the structure of the database in anticipation that it would be central to the museum's curatorial and ecological monitoring activities.

Our interest in narrative, and in prose content and output which must be understandable by the widest possible audience, has taken us in a more literary direction than is usual for database managers, and has biased us against the cryptic codes and abbreviations that creep into most database systems.

We attempted to push through "the Willow swamp of inter-tangled 'worldwide' standards to the Wood Frog chorus of a database structure appropriate for the Eastern Ontario Biodiversity Museum", without spooking potential users and contributors into muddy-bottom silence. To this we brought the experience of a single naturalist building a data structure for his own use that allowed

- the input of natural history records

- partitioning of records as identifications of mass collections are made

- output of data to others who request them.
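The partitioning requirement can be sketched as splitting a mass-collection "lot" record into child records as specimens are identified, with the parent keeping the unsorted remainder. This Python sketch is an assumption about one way to do it, not a description of how EOBase implements partitioning; `LotRecord`, `partition`, and the "L1.1" numbering scheme are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LotRecord:
    # Hypothetical: one record for a mass collection (e.g. a jar of snails) before sorting.
    lot_id: str
    taxon: str
    count: int
    children: List["LotRecord"] = field(default_factory=list)


def partition(lot: LotRecord, taxon: str, count: int) -> LotRecord:
    """Split `count` specimens identified as `taxon` off the unsorted remainder."""
    if count > lot.count:
        raise ValueError("cannot identify more specimens than remain in the lot")
    child = LotRecord(f"{lot.lot_id}.{len(lot.children) + 1}", taxon, count)
    lot.children.append(child)
    lot.count -= count  # the parent keeps only the still-unidentified remainder
    return child


lot = LotRecord("L1", "Gastropoda (unsorted)", 50)
partition(lot, "Zonitoides arboreus", 30)
partition(lot, "Vallonia costata", 15)
print(lot.count, [c.lot_id for c in lot.children])
```

Keeping the children linked to the parent means the original collection event (date, site, collector) is recorded once, while each identified portion can still be catalogued and exported on its own.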

We named the system "EOBase" both as a contraction of 'Eastern Ontario Database', following the tradition in which the ancestral desktop database program was called 'dBase', and in the hope that it would represent a dawning of ecological and biotic awareness among the People of Eastern Ontario.

These are some of the questions we grappled with during the early days of development of EOBase:

- Does the fact that one's database has grown up on its own, in response to the perceived needs of oneself, one's family and community, of other naturalists, and of government agencies, imply that its structure is riddled with hidden meta-data anomalies that will make it useless in a wider context?

- Which of the myriad models for compatibility among such databases are to be taken seriously?

- What's the proper balance between compactness and generality in field names & structure, and fields that specify or code every possible aspect of a record?

- How far is it appropriate to stray from a fully relational structure in order to make records independent of a complex swarm of linked tables?

- Is the desire to make a widely-accessible, widely-contributed-to natural history database a key element in the human population's relationship to other species an elitist pipe-dream or is it an 'eco-centric' populist necessity?

In the decade since the Eastern Ontario Biodiversity Museum (EOBM) failed to make the database central to its curatorial and ecological monitoring activities, and then dispersed its collection to the New Brunswick Museum, we've continued to pump our observations and collections into it, and we presently (Feb 2010) have 86,800 records in the biographical table of the database. In 2006-2008 Matt Keevil curated Wayne Grimm's land snail collections into the database, a total of 3000 records, for deposit in the Canadian Museum of Nature.

When it comes to database programming, naturalists tend to learn the technology they need to know in order to do what they need to do, and leave it at that. One of the leading experts in North American land snails still boots up the blue screen of dBase III+, and that does what he needs it to do for him.

In 2009 Fred tried to wrench the database into the more advanced Visual Foxpro, but he made no net progress. This object-oriented language was thick with options about the appearance of input forms, with limitations based on the presumption that one is operating in a network, and with relations, indices, and input conventions that live in the database structure rather than being written out in code. After consultation with Steven Black, of http://stevenblack.com, we've abandoned the conversion of the database to a more complex platform, and are concentrating on getting Foxpro 2.6 running on all our computers, writing routines for on-the-road data entry, and preparing for field work.

As we do this, we're looking towards making the database into an application which others can easily use for recording, managing, compiling and extracting their natural history observations. If anyone is interested in working with us on this, please contact info@fragileinheritance.org.

The fields of EOBase are listed at http://pinicola.ca/h2001a.htm#table


There is no other independent organized group in Canada dedicated to promoting long-term monitoring. This is your chance to support the work of Fragile Inheritance.

Lavishly illustrated with watercolours and descriptive prose from sea to sea, drawing the baseline for a legacy of beauty and change: Aleta Karstad and Frederick W. Schueler's life work